This article contains information about postgresql.conf file in PostgreSQL. If you want to change Postgresql configuration parameters, you should read the below article.

Postgresql change configuration parameters.

What is postgresql.conf?

The postgresql.conf configuration file basically affects the behavior of the instance. For example, allowed number of connections, database log management, vaccum, determining wal parameters, etc. Of course, all this has a default value when the database is installed, but we can change these values to better suit the workload and working environment. The most basic way to set these parameters is to edit the postgresql.conf file.

postgresql.conf Location

postgresql.conf file is normally stored in the $PGDATA directory. By default, the postgresql.conf file is loaded in the init phase. If we want to find the full path of the postgresql.conf file using the command, we use the following command.

1 | select * from pg_settings where name='config_file'; |

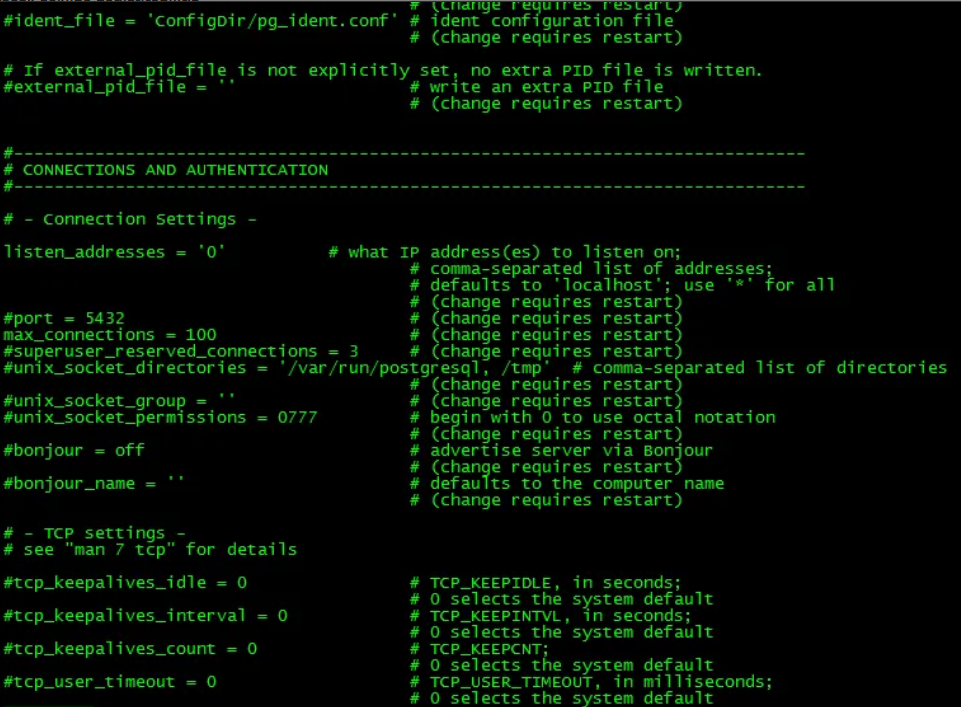

Sample postgresql.conf file

An example postgresql.conf file is as follows, as you can guess, a parameter is specified in each line, the equal sign between name and value is optional. Spaces are trivial, # sets the rest of the line as a comment.

NOTE: If the posgresql.conf file contains multiple entries for the same parameter, all but the last one is ignored, so the last value written in the file is valid.

Parameters set in this way provide default values for the cluster. The settings seen by active sessions will be these values unless they are overridden.

Reload postgresql.conf

When Postgresql main process receives the SIGHUP signal, the Postgresql configuration file is read again. This signal can be sent to postgresql in two ways. The postgresql.conf file can be read again with the “pg_ctl reload” command on the operating system or with the “SELECT pg_reload_conf ()” command from the psql command line tool.

The Main process propagates this signal to all running server processes, so existing sessions also adopt the new values (this happens after completing any client commands currently executing).

Alternatively, you can send the signal directly to a single server transaction. Invalid parameter settings in the configuration file are ignored (but logged) during the SIGHUP process.

1 2 3 4 5 6 7 8 9 10 11 | -bash-4.2$ /usr/pgsql-12/bin/pg_ctl reload server signaled -bash-4.2$ psql psql (12.4) Type "help" for help. postgres=# select pg_reload_conf(); pg_reload_conf ---------------- t (1 row) |

postgresql.auto.conf vs postgresql.conf

Some parameters can only be set at server startup; For these parameters, “# (change requires restart)” is written in the comment section of the postgresql.conf file.

In addition to Postgresql.conf, it includes a postgresql.auto.conf file, which has the same format as postgresql.conf but is intended to be edited automatically, not manually. This file contains the settings provided with the ALTER SYSTEM command. Settings in postgresql.auto.conf override the contents of postgresql.conf. The postgresql.auto.conf file is located in the $PGDATA directory.

NOTE: Pg_settings system view provides access to the server runtime parameters. It is essentially an alternative interface to SHOW and SET commands.

1 2 | select * from setting where name = 'configuration_parameter'; UPDATE pg_settings SET setting = reset_val WHERE name = 'configuration_parameter'; |

PostgreSQL provides several features to subdivide complex postgresql.conf files. These features are particularly useful when managing multiple servers with related but not identical configurations.

1 2 3 | include 'shared.conf' include 'memory.conf' include 'server.conf' |

postgresql.conf parameters

Since there are too many parameters in the Postgresql.conf file, this section of the article is divided into specific titles for file classification purposes; There are titles such as File Locations, Resource Consumption, Connections and Authentication etc. After this part of the article, I will briefly explain the important parameters in postgresql and make recommendations about the setting of these parameters in my opinion. Of course these settings could be different according to your environment and workload.

Connections

listen_addresses (string)

Specifies the TCP/IP Address (s) that the server will listen for connections from client applications.

If we are going to specify more than one address, we can give the hostname and ip numbers by separating them with a comma (,).

* Corresponds to all IP interfaces available.

0.0.0 allows listening on all IPv4 addresses.

:: allows listening on all IPv6 addresses.

If the list is empty, the server will not listen to any IP interface at all. In this case, only Unix domain sockets can be used to connect to it. The default value is localhost, which allows only local TCP / IP “loopback” connections to be made.

port (integer)

Postgresql serves from port 5432 by default. All IP addresses listened to by the server serve over the same port. When more than one postgresql instance is used on the same server, a different port must be used for each cluster/instance/postgresql.

max_connections (integer)

It is used to specify the maximum number of connections that can be opened simultaneously in the database. By default its value is 100. In standby servers, this parameter should be set the same or higher than the parameter value in the master server.

Before increasing your connection count, you should consider whether you really need an increased connection limit. After all, our machine has a limited amount of RAM. If you allow more connections, you can allocate less RAM to each connection. Otherwise, you are in danger of using swaps.

The private memory available for each process is limited to work_mem while query is running. So if you use a high value for max_connections, you will need to set this parameter to low. work_mem has a direct impact on query performance. Usually, a well-written app doesn’t need a lot of connection. If you have an application that needs a lot of connections, you should consider using a tool like pg_bouncer that can handle connections effectively for you.

superuser_reserved_connections (integer)

It determines how many connections out of the number of connections that are set by the max_connections parameter will be reserved for users in the super user role. It is 3 by default. Normal users can open a maximum of 97 connections on the system when the max_connections parameter is set to 100 and the superuser_reserved_connections parameter to 3.

The point to note here is that the postgres user who comes as super user by default should not be used in the application. In other words, if we use the postgres user in our application, this parameter has no meaning.

tcp_keepalives_idle (integer)

It sets a time limit for connections without any network activity between the operating system and the client. If this value is specified without units, it is taken in seconds. A value of 0 (default) selects the operating system default.

tcp_keepalives_interval (integer)

Specifies the period of time that the TCP keepalive message not accepted by the client will be retransmitted. If this value is specified without units, it is taken in seconds. A value of 0 (default) selects the operating system default.

tcp_keepalives_count (integer)

Specifies the number of TCP keepalive messages that can be lost before the server is considered disconnected from the client. A value of 0 (default) selects the operating system default.

tcp_user_timeout (integer)

Specifies the amount of time that transmitted data can remain unconfirmed before the TCP connection is forcibly closed. If this value is specified without units, it is taken in milliseconds. A value of 0 (the default) selects the default operating system.

Authentications

authentication_timeout (integer)

It is the parameter that enables the connection request to be rejected if the connection process of the user to the database is not completed in the expected time. It is set in seconds and its default value is 1 minute.

password_encryption (enum)

When a password is specified in CREATE ROLE or ALTER ROLE, this parameter determines the algorithm to be used to encrypt the password. By default it is md5.

db_user_namespace (boolean)

It is used to create independent users in databases. Default value is off. If its value is on, a custom user can be created for a database using the username @ dbname syntax.

ssl (boolean)

It is the parameter that activates the SSL connection method. By default, its value is off.

Using an SSL certificate encrypts our connection. In this way, our connection information in our package is safe.

ssl_ca_file

Specifies the name of the file containing the SSL server certificate authority (CA). The default is blank, ie the CA file is not uploaded and client certificate verification is not performed.

Resource Consumption

shared_buffers

It is the parameter that allows setting the area to be used as shared buffer in the memory. It is 128 MB by default. If you have a database dedicated server with 1 GB or more of RAM, the reasonable initial value for shared_buffers is 25% of the memory on your system. There are workloads for shared_buffers where even greater settings are effective, but since PostgreSQL is also based on the operating system cache structure, allocating more than 40% of the RAM to shared_buffers will not work better than a smaller shared_buffers. Larger settings for shared_buffers usually require a corresponding increase in max_wal_size.

Recommendation: I wanted to rewrite it because it is important; PostgreSQL uses the kernel buffer area in the operating system as well as its own buffer area, that is, the data thrown from the shared_buffers falls into the operating system cache and receives it from the postgresql operating system cache if needed. This means that data is stored twice in memory, first in the PostgreSQL buffer and then in the kernel buffer (Unlike other databases, PostgreSQL does not provide IO directly), this is called double buffering. After highlighting this part, coming to the suggestion, the default value of Shared_buffer is set to a very low value and you cannot get much benefit from it. It is low because some machines and operating systems do not support higher values. However, on most modern machines you need to increase this value for optimum performance. The recommended value is 25% of your total machine RAM. You should try lower and higher values because in some cases we will get good performance with a setting above 25%. The configuration really depends on your machine and operating data set.

huge_pages (enum)

It is the parameter that enables to set huge pages usage options in the shared memory area. It can take the value “Try, on or off”. By default it is Try. It tries to use the Huge pages, if not, there will be no problem in the system operation. If it cannot be used when set as on, the database cannot be started. When set to Off, it is not used at all.

Recommendation: The use of huge pages increases performance by consuming less CPU time for small table pages and memory management, so I recommend using huge pages by adjusting the necessary settings in the operating system.

temp_buffers (integer)

It is the parameter that determines the value of the maximum temporary buffer space to be used for each session. These are the session specific buffer spaces used only to access temporary tables. Default value is 8MB.

work_mem (integer)

Sets the maximum amount of memory to be used by a query operation (such as a sort or hash table) before writing to temporary disk files. The default is four megabytes (4MB). Sorting operations are used for ORDER BY, DISTINCT and merge join. Used for processing hash tables, hash joins, hash-based aggregation, hash-based processing, and IN subqueries. Several active sessions may be doing this type of action at the same time. Therefore, the total memory used may be more than work_mem. This fact should be kept in mind when choosing the value.

Recommendation: Memory operations are much faster than on disk, but setting too high a value may cause a memory bottleneck for your production media. Setting this parameter globally may cause very high memory usage. Therefore, it is highly recommended that you change this at the session level.

I want to talk about another approach; It is suggested to use the following formula to determine the work_mem value. If a connection pooling tool is used and the max connection number can be determined, it may make sense to use this formula.

1 | Total RAM * 0.25 / max_connections |

Note: We can also directly assign work_mem to a role;

1 | alter user test set work_mem='4GB'; |

maintenance_work_mem (integer)

Specifies the maximum amount of memory to be used by maintenance operations such as VACUUM, CREATE INDEX, and ALTER TABLE ADD FOREIGN KEY. It is 64 megabytes (64MB) by default. Since only one of these processes can be executed by a session at the same time, and most of them do not run simultaneously in normal setup, it is safe to set this value to a value much larger than work_mem.

temp_file_limit

It limits the total size of all temporary files used by each process. -1 (default) means no limit.

Recommendation: I think it is useful to determine this value by monitoring database processes. For example, I witnessed that the disk was full due to an accidental 100 billion sort operation from the application.

bgwriter_delay (integer)

It specifies how long the background writer process should wait between successive runs.

Recommendation: This parameter can be dropped at any time, but my general approach is to change the parameters related to bgwriter if you are facing a problem by monitoring the chechkpoint increments or pg_stat_bgwriter.

bgwriter_lru_maxpages (integer)

Specifies the maximum number of buffers to be written by the process in each run of bgwiter.

bgwriter_lru_multiplier (floating point)

The number of dirty buffers written in each round depends on the number of new buffers needed by the server processes in the last rounds. The average last need is multiplied by the bgwriter_lru_multiplier to arrive at an estimate of the number of buffers that will be needed in the next round. So if we set this value to 3.0, the number of dirty buffers it can write each time will be bgwriter_lru_maxpages * 3. By default, this value is 2.0.

effective_io_concurrency (integer)

Sets the number of concurrent disk I / O operations that PostgreSQL expects to be executed simultaneously. Increasing this value will increase the number of I / O processes that any PostgreSQL session attempts to start in parallel. The default value is 1, we can use 0 to disable it, at the same time, this parameter can take a value between 1-1000.

Recommendation: For magnetic drives, this setting is the number of drives in the RAID 0 or RAID 1 miror structure used for the database. For example, if you have 10 disks in RAID 1 structure, this parameter should be 5, or if you have 10 disks in RAID 0, this value should be 10. A higher value than necessary to keep the disks busy will only cause extra CPU overhead. SSDs and other memory-based storage can often handle many simultaneous requests, so the best value could be hundreds.

max_worker_processes (integer)

Sets the maximum number of background processes that the system can support. Default is 8. When running a standby server, you must set this parameter to the same or higher value than the primary server. Otherwise queries will not be allowed on the standby server. As a suggestion, I cannot say that the value should be this, but we can round the number that corresponds to 60% -70% of your total CPU and use this value.

max_parallel_workers_per_gather (integer)

The maximum number of workers that the Gather or GatherMerge node can use. It should be set equal to max_worker_processes as suggestion.

max_parallel_maintenance_workers (integer)

The maximum number of processes to be used in maintenance operations such as CREATE INDEX, vacuum.

max_parallel_workers (integer)

Sets the maximum number of workers that the system can support for parallel operations. The default value is 8. When increasing or decreasing this value, also consider adjusting max_parallel_maintenance_workers and max_parallel_workers_per_gather. Also note that a setting higher than max_worker_processes for this value will have no effect. Because parallel workers receive from the worker pool created by this setting.

LOGGING

log_statement (enum)

Checks which SQL queries are logged. Valid values are none (off), ddl, mod, and all (all queries). By default it is none. As a suggestion, I recommend leaving it as ddl.

log_min_duration_statement (integer)

If the statement is run for at least the specified time, it will cause the duration of each completed statement to be logged. For example, if you set it to 250ms, all SQL queries running 250ms or longer will be logged. Enabling this parameter can be helpful in tracking non-optimized queries in your applications. As a suggestion; By setting this parameter to a few minutes, you can fix problematic queries and then decrease this value. Overrides log_min_duration_sample.

log_checkpoints (boolean)

It enables checkpoint and restartpoint logs to be recorded in the server log. Some statistics such as the number of buffers written and the time taken to write them are included in the log messages. Default is off.

log_lock_waits (boolean)

This parameter checks whether the log message is generated when a session waits longer than the deadlock_timeout time to receive lock. This is useful in determining whether log wait times cause poor performance. Default is off. If I have to make suggestions, I think it is useful to open this parameter.

log_destination (string)

PostgreSQL supports several methods for logging server messages, including “stderr, csvlog, and syslog”. The default value is stderr.

logging_collector (boolean)

This parameter enables the log collector, a background process that captures log messages sent to stderr and redirects them to log files.

Note: log collector is designed to never lose messages. This means that in case of overload, server operations can be blocked when attempting to send additional log messages when the collector is left behind. Conversely, syslog prefers to leave messages if it cannot write, which means that in such cases it may not be able to log some messages, but will not block the rest of the system.

log_directory (string)

When the logging_collector is enabled, this parameter determines the directory where the log files will be created. By default it is the log directory under $PGDATA.

log_filename (string)

When the logging_collector is enabled, this parameter sets the filenames of the generated log files. The default is postgresql-%Y-%m-%d_%H%M%S.log. We use the arguments of the date command in linux for naming here.

log_file_mode (integer)

On Unix systems, this parameter sets the permissions of log files when logging_collector is enabled. The parameter value is expected to be a numeric mode specified in the format accepted by chmod and umask system calls. The default permissions are 0600, which means only the host can read or write log files.

log_rotation_age (integer)

When logging_collector is enabled, this parameter determines the maximum lifetime of a single log file, and then a new log file is created. If this value is specified without units, it is taken as minutes. The default is 24 hours. You can set it to zero to disable the time-based generation of new log files.

log_rotation_size (integer)

When logging_collector is enabled, this parameter determines the maximum size of a single log file. After this amount of data is sent to a log file, a new log file will be created. If this value is specified without units, it is taken in kilobytes. The default value is 10 megabytes. You can set it to zero to disable the creation of new log files based on size.

log_truncate_on_rotation (boolean)

When logging_collector is enabled, this parameter causes PostgreSQL to truncate (overwrite) instead of appending it to any existing log file with the same name.

event_source (string)

When event_source log logging is enabled, this parameter specifies the program name used to identify PostgreSQL messages in the log. The default is PostgreSQL.

log_min_messages (enum)

Controls which message levels are written to the server log. Valid values are DEBUG5, DEBUG4, DEBUG3, DEBUG2, DEBUG1, INFO, NOTICE, WARNING, ERROR, LOG, FATAL and PANIC. Each level contains all the levels that follow it. The later the level, the fewer messages will be sent to the log. Default is WARNING.

| Severity | Usage | syslog | eventlog |

| DEBUG1 .. DEBUG5 | It provides successively more detailed information for use by developers. | DEBUG | INFORMATION |

| INFO | Provides information indirectly requested by the user, eg output from VACUUM VERBOSE. | INFO | INFORMATION |

| NOTICE | Provides information that can help users, such as a notification that long identifiers have been truncated. | NOTICE | INFORMATION |

| WARNING | Provides warnings of possible problems, for example COMMIT outside of a transaction block. | NOTICE | WARNING |

| ERROR | Reports an error that caused the current command to be aborted. | WARNING | ERROR |

| LOG | It reports information that DBAs are interested in, for example checkpoint activity. | INFO | INFORMATION |

| FATAL | Reports an error that caused the current session to be canceled. | ERR | ERROR |

| PANIC | Reports an error that caused all database sessions to be canceled. | CRIT | ERROR |

log_min_error_statement (enum)

Controls that the SQL queries that cause the error condition will be recorded in the server log. The current SQL command is included in the log entry for any message with the specified severity rating or higher. Valid values are DEBUG5, DEBUG4, DEBUG3, DEBUG2, DEBUG1, INFO, NOTICE, WARNING, ERROR, LOG, FATAL and PANIC. The default is ERROR, which means any statements that cause errors, log messages, fatal errors or panic will be logged.

log_min_duration_sample (integer)

Allows sampling of the duration of completed statements that run for at least the specified time period. This generates the same type of log entries as log_min_duration_statement.

log_connections (boolean)

In addition to successfully completing client authentication, it causes every connection attempt made with the server to be logged. Default is off.

log_disconnections (boolean)

Causes session terminations to be logged. The log output provides similar information as log_connections and also the session duration. Default is off.

log_line_prefix (string)

This is a printf-style string that appears at the beginning of each log line. It is mostly used for the needs of log management tools. As a suggestion, if I need to use it, I usually set this parameter as follows.

1 | log_line_prefix='%t:%r:%u@%d:[%p]: ' |

| Escape | Effect | Session only |

| %a | Application name | yes |

| %u | User name | yes |

| %d | Database name | yes |

| %r | Remote host name or IP address, and remote port | yes |

| %h | Remote host name or IP address | yes |

| %b | Backend type | no |

| %p | Process ID | no |

| %t | Time stamp without milliseconds | no |

| %m | Time stamp with milliseconds | no |

| %n | Time stamp with milliseconds (as a Unix epoch) | no |

| %i | Command tag: type of session’s current command | yes |

| %e | SQLSTATE error code | no |

| %c | Session ID: see below | no |

| %l | Number of the log line for each session or process, starting at 1 | no |

| %s | Process start time stamp | no |

| %v | Virtual transaction ID (backendID/localXID) | no |

| %x | Transaction ID (0 if none is assigned) | no |

| %q | Produces no output, but tells non-session processes to stop at this point in the string; ignored by session processes | no |

| %% | Literal % | no |

log_replication_commands (boolean)

Her replication komutunun sunucuya kaydedilmesine neden olur. Varsayılan değer kapalı.

log_temp_files (integer)

Temp dosya adlarının ve boyutlarının kaydedilmesini kontrol eder. Sıfır değeri, tüm temp dosya bilgilerini loga kaydederken, pozitif değerler yalnızca boyutu belirtilen veri miktarından büyük veya bu miktara eşit olan dosyaları loga kaydeder. Varsayılan ayar, böyle bir log kaydını devre dışı bırakan -1’dir.

log_timezone (string)

Sunucu loguna yazılan timezone için kullanılan saat dilimini ayarlar. TimeZone’dan farklı olarak, bu değer cluster çapındadır. varsayılan GMT’dir, ancak bu genellikle initdb aşamaında bu ayar değiştirilir; initdb, sistem ortamına karşılık gelen bir ayar kuracaktır.

Run-time Statistics

These parameters control the server-wide statistics collection features. When statistics collection is enabled, the generated data can be accessed via the pg_stat and pg_statio family of system views.

stats_temp_directory (string)

Sets the directory where temporary statistics data will be stored. The default is pg_stat_tmp. Pointing this into a RAM-based file system reduces physical I / O requirements and can increase performance. As a suggestion, since data is frequently written to this file and it will not cause much trouble in case of data loss, I think you should definitely create a RAM-based file with the tmpfs file system and change this parameter.

track_activities (boolean)

It provides gathering information about each session’s command currently running and when it starts running. This parameter is enabled by default.

track_activity_query_size (integer)

Specifies the amount of memory allocated for the Pg_stat_activity.query (to store the text of the currently executed command for each active session). The default value is 1024 bytes.

track_counts (boolean)

Provides collection of statistics about database activity. This parameter is enabled by default because the autovacuum daemon needs the information collected.

track_io_timing (boolean)

Can be enabled to track database I / O timing. This parameter is off by default because it will repeatedly query the operating system for the current time, which can cause some significant overhead. You can use the pg_test_timing tool to measure the timing overhead on your system. We can also display the I / O time in pg_stat_database view, in EXPLAIN output when BUFFERS option is used, and by pg_stat_statements.

track_functions (enum)

Provides tracking of function call numbers and runtime.

Client Connection Defaults

client_min_messages (enum)

Controls which message levels will be sent to the client. Valid values are DEBUG5, DEBUG4, DEBUG3, DEBUG2, DEBUG1, LOG, NOTICE, WARNING, and ERROR. The default is NOTICE.

search_path (string)

This variable specifies the order in which schemas are searched if the schema is not specified when accessing an object (table, data type, function, etc.). When there are objects with the same names in different schemas, the first object in the search path is used. We can access an object that is not found in any of the schema in the search path, only by using the schema it contains. The default value of this parameter is “$ user”, public.

row_security (boolean)

This variable checks if an error occurs instead of applying row level security policy. When set to ON, policies are normally enforced. When set to OFF, queries that apply at least one security policy will throw an error. Default is ON.

default_table_access_method (string)

This parameter specifies the default table access method to use when creating tables or materialized views, unless the CREATE command explicitly specifies an access method, or when SELECT… INTO is used. The default is heap.

default_tablespace (string)

This variable specifies the default tablespace where objects (tables and indexes) will be created when the CREATE command does not explicitly specify a tablespace.

temp_tablespaces (string)

This variable specifies the default temp tablespace where objects (tables and indexes) will be created when the CREATE command does not explicitly specify a tablespace.

default_transaction_isolation (enum)

Each SQL transaction has an isolation level, which can be “read uncommitted”, “read committed”, “repeatable read”, or “serializable”. This parameter checks the default isolation level of each new transaction. The default is “read committed”.

default_transaction_read_only (boolean)

Read-only transactions cannot change non-temp tables. This parameter checks the default read-only state of each new transaction. Default is off.

session_replication_role (enum)

Controls the triggering of replication-related triggers and rules for the current session. Possible values are origin (default), replica and local.

statement_timeout (integer)

Cancels any query taking longer than the specified time. A value of zero (default) disables the timeout.

lock_timeout (integer)

Cancels any query that waits longer than the specified time when trying to get a lock on a table, index, row, or other database object. A value of zero (default) disables the timeout.

idle_in_transaction_session_timeout (integer)

Terminates the session for an open transaction that has been idle longer than the specified time. A value of zero (default) disables the timeout.

DateStyle (string)

Determines the display format for date and time values. For historical reasons.

TimeZone (string)

Sets the time zone to view and interpret the time zone. The built-in default is GMT, but in the initdb phase, it will set a setting corresponding to the system environment.

lc_messages (string)

Sets the language in which messages are displayed. Acceptable values depend on the system. If this variable is set to an empty string (the default value), the value is inherited from the server’s env environment, depending on the system.

Other Parameters

deadlock_timeout (integer)

This parameter is the time to wait on a lock before checking whether it has a lock state. Lock control is relatively expensive, so the server doesn’t execute it every time it waits for a lock. Increasing this value reduces the time wasted on unnecessary lock checks, but slows down the detection of true lock errors. The default value is one second (1s).

wal_segment_size (integer)

Informs the size of WAL segments. The default value is 16MB.

wal_block_size (integer)

Informs the size of the WAL disk block. It is determined by the value of XLOG_BLCKSZ when creating the server. The default value is 8192 bytes.

data_checksums (boolean)

Informs whether the data checksum is enabled for the Cluster. As a suggestion, I must say that the checksum must be active in your cluster.

checkpoint_timeout

This is a timeout value that can be set in seconds (default), minutes, or one hour. it is 5 minutes by default. As a suggestion, setting this value should be based on the intended use of your database. While it can be increased up to 1 day in OLAP type systems, values such as 5-10 minutes can be selected in OLTP systems.

checkpoint_completion_target (integer)

Usually, it is a parameter that makes PostgreSQL try to write data slower. Usually you have checkpoint_timeout set to 5 minutes. (if you haven’t changed it) and default checkpoint_completion_target is 0.5. This means PostgreSQL will try to make the checkpoint take 2.5 minutes to reduce the I / O load.

For example, we have 100GB of data to be written to files. And my disk is capable of writing 1 GB per second. When doing a normal checkpoint, it causes our writing capacity to be used up to 100% for 100 seconds to write data. However – when checkpoint_completion_target is set to 0.5 – PostgreSQL tries to write data in 2.5 minutes, so it doesn’t use all of our write capacity. (Uses ((100*1024)/(2,5*60)=682 MB/s). As a suggestion, I suggest using 0.7 for this parameter.

max_wal_size (integer)

The maximum size the WAL is allowed to grow during automatic checkpoints. Default is 1 GB. As a suggestion, I recommend that you increase this value if there is space on this disk.

min_wal_size (integer)

As long as WAL disk usage falls below this setting, old WAL files are always recycled at the checkpoint point, rather than being removed for future use. It can be used to ensure that enough WAL space is allocated to handle spikes in WAL usage, for example when running large batches. It is 80 MB by default.

seq_page_cost (floating point)

Sets the planner’s estimate of the cost of the sequential reading. Default value is 1.0

random_page_cost (floating point)

Sets the planner’s estimate of the cost of a disk page that is not fetched as Sequential. Default is 4.0.

Decreasing this value according to the seq_page_cost value will cause the system to prefer index scans.

Increasing it will make index scans appear more expensive.

Recommendation: Random access to mechanical disk storage is normally much more expensive than four times sequential access. However, since most random accesses to the disk are assumed to be caches, a lower default is used (4.0).

The default value is 40 times slower than random access. It can be thought of as expecting 90% of random readings to be cached.

If you believe that the 90% cache ratio for your workload is a false assumption, you can increase random_page_cost to better reflect the actual cost of random storage readings. Accordingly, if there is a possibility that your data is fully cached, for example, if the database is smaller than the total server memory, it may be appropriate to lower random_page_cost. Or, if you have a fast I/O structure in random access, such as SSD, this value can be reduced somewhat.

restart_after_crash (boolean)

When set to on, the default value, PostgreSQL will restart automatically after a backend crash. Leaving this value open is normally the best way to maximize the availability of the database. However, in some cases, such as when PostgreSQL is invoked by clusterware, disabling automatic startup can be useful so that the cluster software can take control and take actions it deems appropriate.

data_sync_retry (boolean)

When set to off, the default value, PostgreSQL will generate a PANIC-level error if it is unable to dump dirty data into the file system. This causes the database server to crash. If set to On, PostgreSQL will report an error instead, but will continue to run so the data cleanup can be retried at the next checkpoint.

block_size (integer)

Informs the size of a disk block. It is determined by the value of BLCKSZ while creating the server. The default value is 8192 bytes.

data_directory_mode (integer)

In Unix systems, this parameter informs the permissions of the data directory originally defined with (data_directory).

segment_size (integer)

Indicates the number of blocks (pages) that can be stored in a file segment. It is determined by the value of RELSEG_SIZE when creating the server. The maximum size of the segment file in bytes equals segment_size multiplied by block_size; it is 1GB by default.

server_version (string)

Reports the version number of the server. It is determined by the value PG_VERSION when creating the server.

![]()

Database Tutorials MSSQL, Oracle, PostgreSQL, MySQL, MariaDB, DB2, Sybase, Teradata, Big Data, NOSQL, MongoDB, Couchbase, Cassandra, Windows, Linux

Database Tutorials MSSQL, Oracle, PostgreSQL, MySQL, MariaDB, DB2, Sybase, Teradata, Big Data, NOSQL, MongoDB, Couchbase, Cassandra, Windows, Linux