General Concepts:

First of all, the database has two files by default.

These are data file with mdf extension (called Primary Data File) and transaction log files with extension ldf.

There is a third file type which is optional with the nDF extension. It is called Secondary Data file.

If you create a data file other than the mdf extension data file, the extension will be ndf.

The Primary Data File contains information about the database and other files in the database.

For example, if you select mdf file while attaching, sql server will automatically fetch other files.

You can find the details of the detach and attach operations in the article titled “How To Change The Disk Of Database Files“.

If you do not create a secondary data file, all data will be kept in the Primary Data File.

Primary Data File is located in the PRIMARY File Group which is the default file group.

All system tables are under PRIMARY file group.

If you do not create a File Group other than the PRIMARY File Group, the secondary data files that you create will automatically be created under the PRIMARY file group.

So each data file has to be in a file group.

File Groups are used to allocate space from the operating system, to facilitate management, and to group files.

I will explain the details later in the article.

Preparation:

Before creating a database, we need to ask the people who want to create a database the following questions.

Database Name:

Database Collation Information:

Estimated size after 2 years:

How many people will use it:

By specifying Collation you have determined how the data will be sorted and compared.

For example, does letter a and letter A have the same meaning?

“Estimated size after 2 years” and “How many people will use it” are the ones that play a critical role in database design.

For example, the database will be 5 TB after 2 years, and 50 thousand people use the database at the same time.

If you create database with default options, you will have very serious performance problems.

Create The Database:

Let’s start by creating the database with default settings for small databases.

The concept of smallness varies according to the person.

I’m doing database creation with default settings for databases that will be smaller than 500 GB.

There is another design I use for between 500 GB and 2 TB.

There is another design I use for 2 TB and above.

We recommend that you do the same for maximum performance.

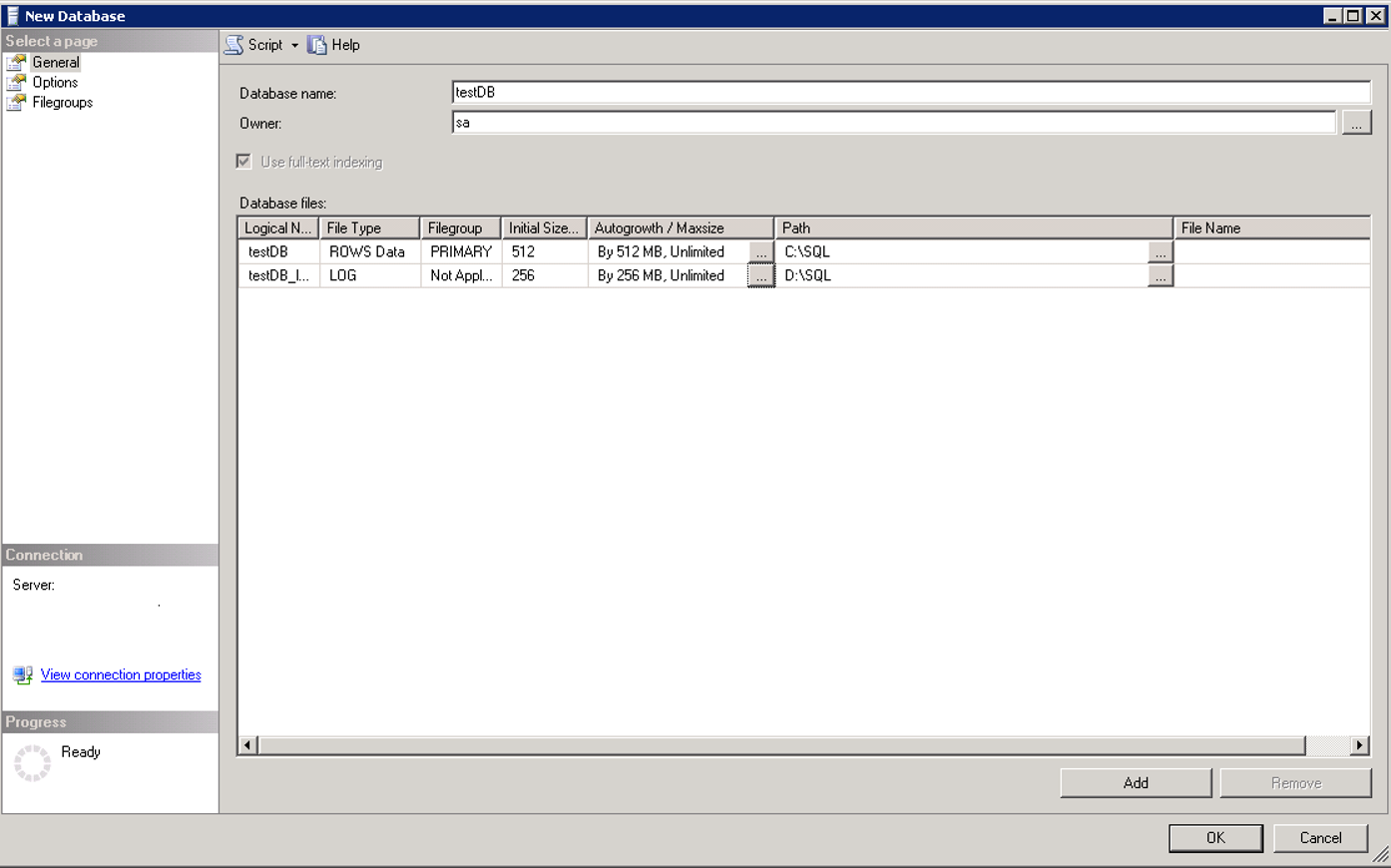

To create a database with default settings for databases with sizes between 0 and 500 GB:

Right-click on the Databases tab on the SSMS and select New Database.

In the Database Name section we give your a name to our database.

In the Owner section, you can enter the login information of the owner of the database.

If you do not want to grant owner authority to the person requesting the database, you can type sa and pass it.

According to the name you wrote in the Database name section, it will determine the logical name itself.

I set the Initial Size information to 512 mb for the data file and 256 mb for the log file.

These default values come from the model database.

If you apply the settings you use globally in the model database, you do not have to configure them every time.

You can find detailed information on my article “SQL Server System Databases“.

It is very important that Autogrowth is not set to%.

For example, in some systems, “Initial size” is 5 mb.

The database creator does not increase the initial size here.

And the auto growth setting is 10%. Consider that the autogrowth of the log file is set in this way.

This means: Whenever the size of the Transaction Log file grows, it will grow by 10% of 5 MB.

In this way, the vlf count in the log files will be too much and this will affect performance very seriously.

You can find detailed information about Vlf count in the article “Vlf(Virtual Log File) Count“.

After setting the Initial Size and Autogrowth, we will determine the location of the data file and the log file of the database from the Path section.

Putting data files and log files on different disks will improve performance.

Even if you have the possibility, make sure that the disk where the log file is located is RAID 10 in order to be faster.

Because you can get more IO by spreading data files to file groups and multiple disks by creating more than one file.

But even if you create the log file in more than one number, it is limited to the IO speed of the disk because it writes to just one at same time.

I would also recommend that you put your log file on the SSD if you have the possibility.

It is very important that you know whether you need it.

If you do not need it, such a cost will be unnecessary.

For example, if your wait types is usually WRITE LOG, this will mean that your log file is running slow on the disk.

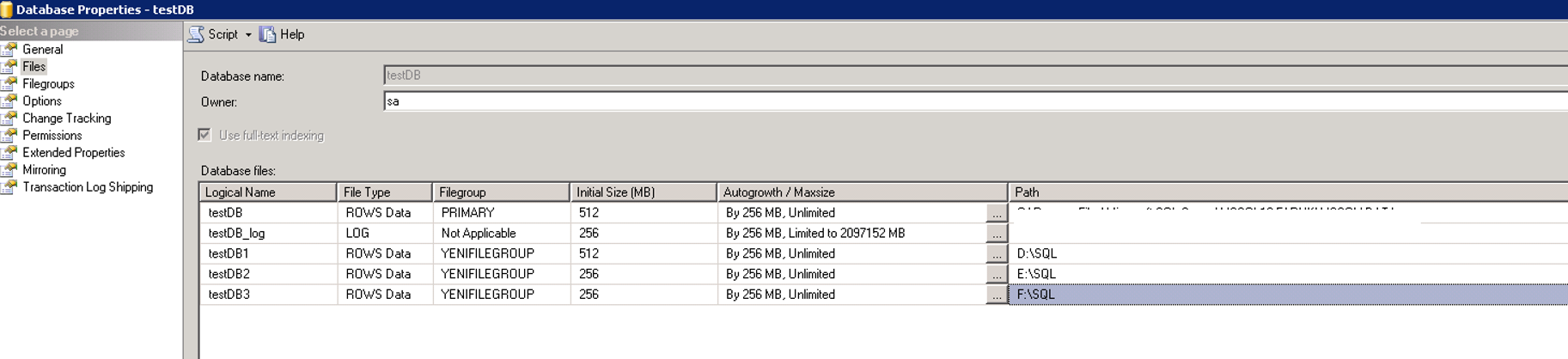

To create a database for databases with sizes between 500GB and 2TB:

As mentioned above, the database grows by using the primary file under the primary file group by default.

However, according to my experience, when the size of the database goes up to 500 GB, IO capacity of single disk is used on a single file, so performance problems are starting to happen.

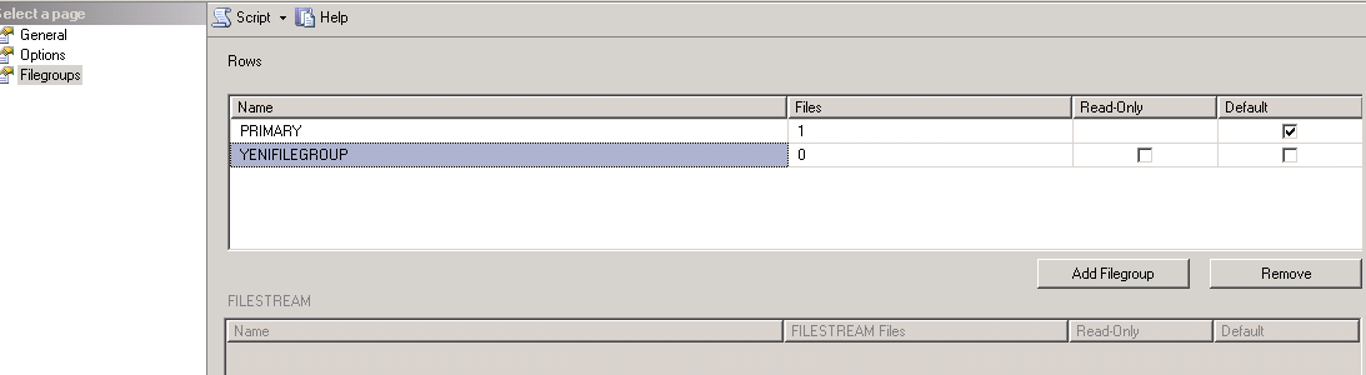

Since the system tables are in the primary file group, we need to create a new file group instead of adding a new file to the Primary File Group.

We should create files as many as the number of disks we have.

As you will see below, we right click on the database and select the properties and click on add file group in the file group tab.

We give the name of the file group on the name part and click ok.

You shoul read the article “What is Database File Group And How To Recreate Large Tables In Another File Group” for detailed information about this topic.

We are adding new files into this new filegroup as below.

If you want the newly created tables to be automatically defined on the newly created file group instead of the PRIMARY FileGroup, then after creating the files, we will go to the File group tab and select the file group that we just created as the defaulf file group.

For databases that will grow up to 2 TB, it is beneficial to have at least 6 disks and at least 6 files.

To create a database for databases with a size larger than 2 TB:

First of all you have to ask the application developers which tables will grow.

For example, you received an answer like this.

The database size will be 5 TB.

And the biggest tables will be as follows.

Table X -> 1 TB

Table Y -> 700 GB

Table Z -> 500 GB

Table K -> 500 GB

Table L -> 400 GB

Table M -> 400 GB

Later, we need to identify the columns of these tables that are most suitable for partitioning.

And we need to partition these tables according to the needs of the application.

You can create separate file groups for each of the above tables, define separate files, and partition each one separately.

Or,

You can create a single file group for all of them in a single Partition Schema. This will depend on your needs.

You can find details about Partition in the article named “How To Create Partition On SQL Server” name.

![]()

Database Tutorials MSSQL, Oracle, PostgreSQL, MySQL, MariaDB, DB2, Sybase, Teradata, Big Data, NOSQL, MongoDB, Couchbase, Cassandra, Windows, Linux

Database Tutorials MSSQL, Oracle, PostgreSQL, MySQL, MariaDB, DB2, Sybase, Teradata, Big Data, NOSQL, MongoDB, Couchbase, Cassandra, Windows, Linux